What happened

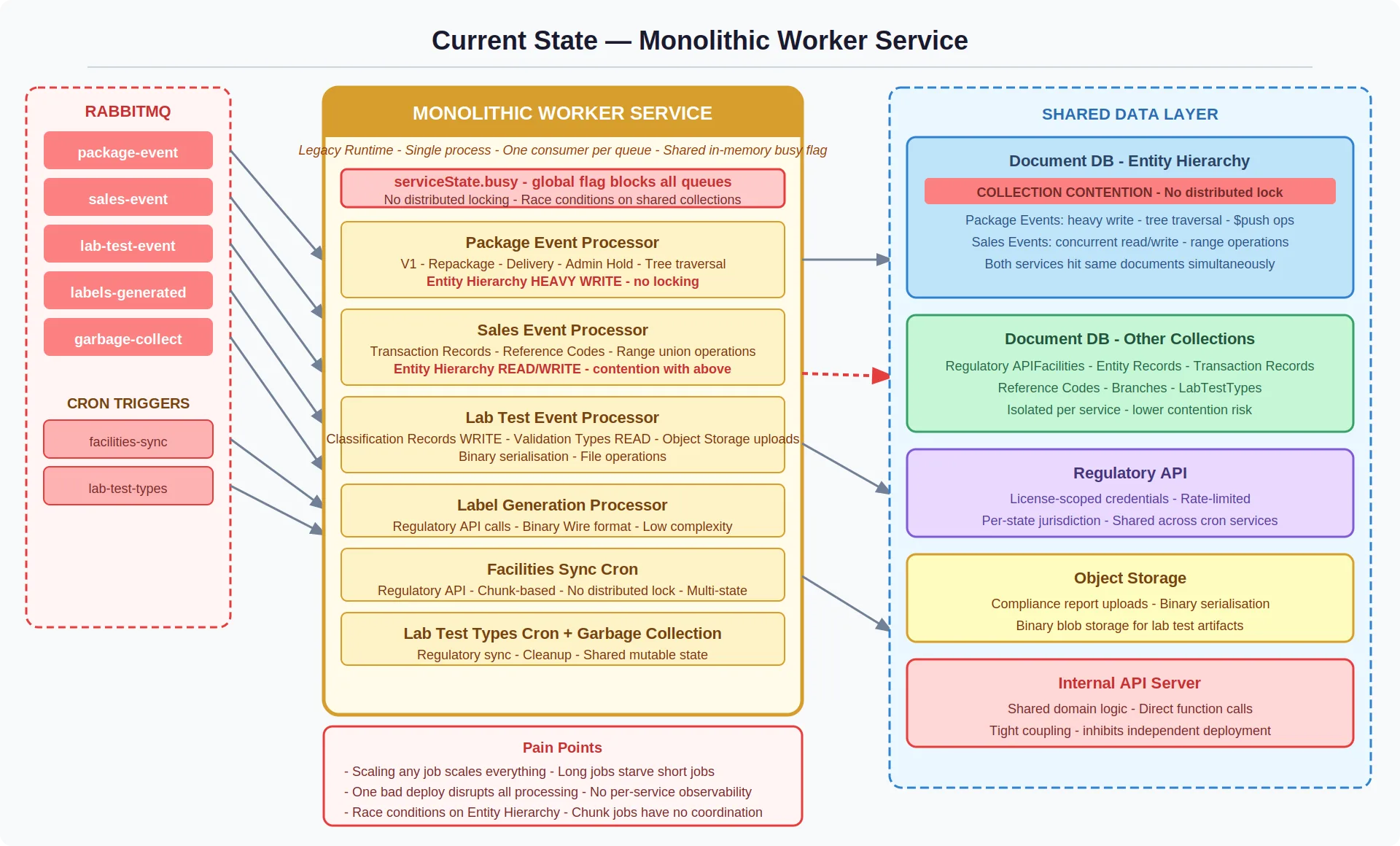

The platform's background processing ran through a single monolithic worker service — six distinct event-driven workloads sharing one process, one deployment pipeline, and one in-memory busy flag that enforced sequential message processing.

This was a deliberate early-stage tradeoff. One service meant one thing to deploy, one thing to monitor, and minimal infrastructure overhead. It worked — until it didn't.

The scaling ceiling surfaced through three business-visible symptoms. Customers experienced processing delays during high-volume periods because long-running ingestion batches blocked every other job type in the queue. The operations team couldn't deploy a fix to one domain without risking all six. And the engineering team identified a data integrity risk: two high-throughput processors wrote to the same database collection with no coordination. The single-process constraint masked the resulting race condition, which would become catastrophic the moment we tried to scale horizontally.
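To make the first symptom concrete, here is a minimal sketch of head-of-line blocking under a single busy flag. The job names and durations are hypothetical, and the model assumes all jobs arrive at the same moment. It illustrates the effect only and is not the platform's actual scheduler.

```python
from collections import defaultdict


def completion_times(jobs, isolate_by_workload):
    """Return {job_name: finish_time_seconds} for jobs that all arrive at t=0.

    jobs: list of (name, workload, duration_seconds) in arrival order.
    isolate_by_workload=False models one worker behind a single busy flag.
    isolate_by_workload=True models one independent worker per workload.
    """
    elapsed = defaultdict(float)  # time consumed so far in each processing lane
    finished = {}
    for name, workload, duration in jobs:
        lane = workload if isolate_by_workload else "shared"
        elapsed[lane] += duration
        finished[name] = elapsed[lane]
    return finished


# Hypothetical workload mix: one long ingestion batch ahead of two short jobs.
jobs = [
    ("ingest-batch", "ingestion", 600.0),
    ("cleanup-1", "cleanup", 2.0),
    ("sync-1", "sync", 5.0),
]

shared = completion_times(jobs, isolate_by_workload=False)
isolated = completion_times(jobs, isolate_by_workload=True)
```

In the shared case the two-second cleanup job finishes only after the ten-minute batch; with per-workload lanes it finishes in two seconds.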

Decision point

This architecture had become a constraint on the business, not just on the engineering team. We couldn't scale capacity for one workload without scaling everything. We couldn't deploy with confidence. And we couldn't isolate failures: a problem in cleanup processing was indistinguishable from one in the primary data pipeline without manually reading raw logs.

How it was addressed

The first leadership decision was framing the problem correctly. This wasn't a refactoring exercise — it was a risk mitigation investment. The system processed compliance-critical data on strict SLAs. The question wasn't whether to decompose, but how to do it without introducing more risk than we were retiring.

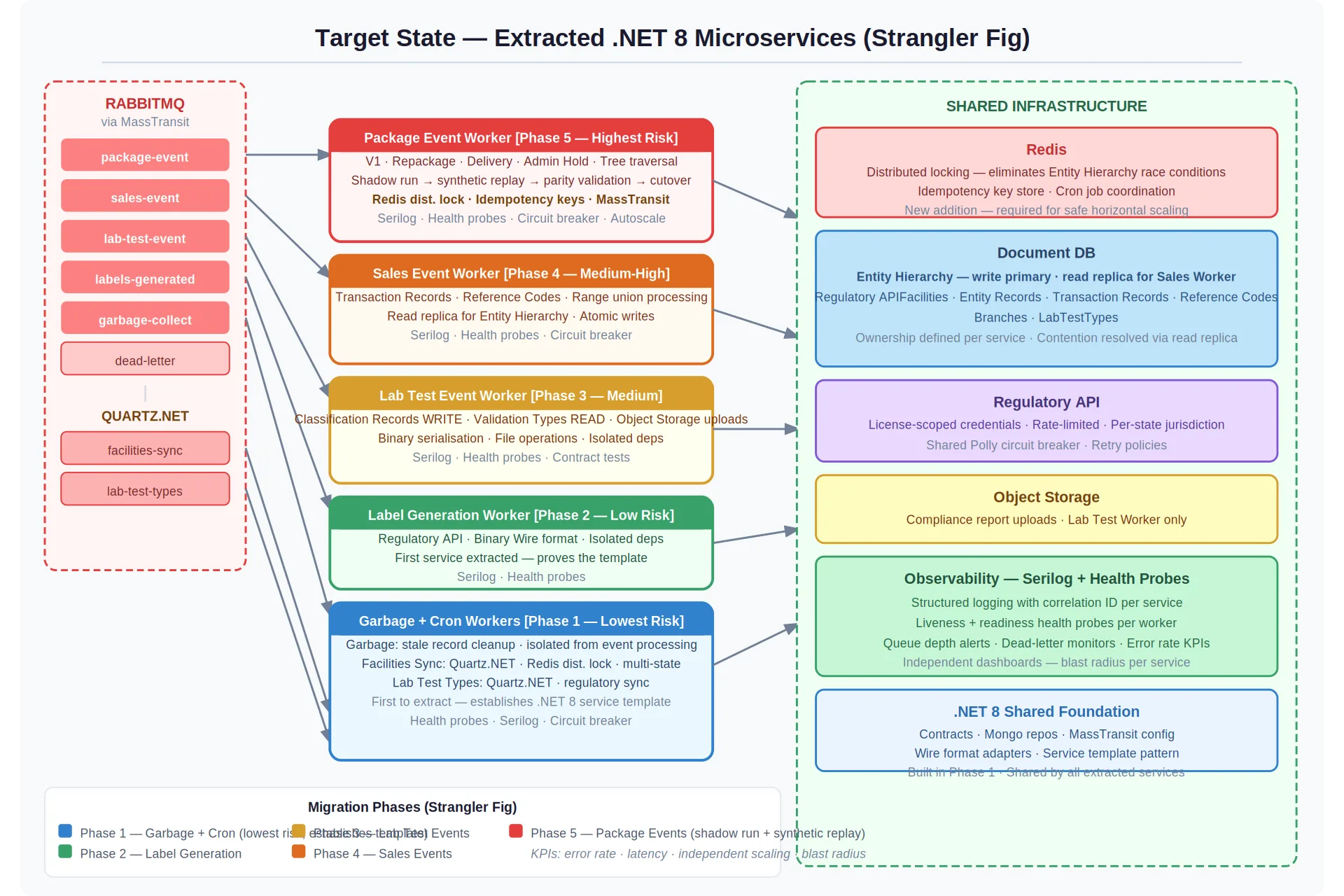

We chose the Strangler Fig pattern — the monolithic worker stays live throughout, with traffic routed to new services one event boundary at a time. Every phase has an explicit rollback path. No big-bang cutover, no downtime windows.
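The routing idea can be sketched as a small lookup that defaults to the legacy worker and moves one event type at a time. This is an illustration under assumptions: the event type and service names are hypothetical, and the source does not say where the routing decision actually lived (broker bindings, configuration, or code).

```python
from dataclasses import dataclass, field

LEGACY = "legacy-worker"  # hypothetical name for the monolithic worker


@dataclass
class StranglerRouter:
    """Routes each event type to the legacy worker unless it has been cut over."""

    migrated: dict = field(default_factory=dict)  # event_type -> new service name

    def cut_over(self, event_type, service):
        """Send future events of this type to the new service."""
        self.migrated[event_type] = service

    def roll_back(self, event_type):
        """Explicit rollback path: return this event type to the legacy worker."""
        self.migrated.pop(event_type, None)

    def route(self, event_type):
        return self.migrated.get(event_type, LEGACY)


router = StranglerRouter()
before = router.route("cleanup.requested")           # legacy handles everything at first
router.cut_over("cleanup.requested", "cleanup-service")
after = router.route("cleanup.requested")            # only this boundary has moved
untouched = router.route("ingestion.received")       # everything else stays on legacy
router.roll_back("cleanup.requested")
rolled_back = router.route("cleanup.requested")
```

Because the default is always the legacy worker, rolling back a phase means removing one entry rather than redeploying anything.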

Sequence by risk, not by convenience. It would have been tempting to start with the most painful workload. Instead, we started with the lowest-risk services — scheduled syncs and cleanup tasks. This validated the new service template, deployment pipeline, and autoscaling in production before anything customer-facing was touched. The team built confidence through small, reversible wins.

Resolve shared state before splitting ownership. The database collection contention was the single highest-risk item. Extracting services without solving the coordination problem first would have turned a single-process race condition into a distributed one. We required Redis distributed locking and read replica routing to be validated in production before the dependent service could be extracted. This blocked the timeline but protected the migration's integrity.
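A minimal sketch of the locking discipline follows. It uses an in-memory stand-in for Redis so it runs without a server, and it mimics two well-known Redis idioms: acquiring with `SET key token NX PX ttl` and releasing only if the stored token still matches. The class, function names, and key prefix are assumptions for illustration; the source does not describe how the production locks were implemented.

```python
import uuid


class InMemoryLockStore:
    """Stand-in for Redis, for illustration only (not safe across processes)."""

    def __init__(self):
        self._entries = {}  # key -> (owner_token, expires_at_ms)

    def set_if_absent(self, key, token, ttl_ms, now_ms):
        """Mimics SET key token NX PX ttl_ms: succeeds only if no live entry exists."""
        current = self._entries.get(key)
        if current is not None and current[1] > now_ms:
            return False  # lock is held and has not expired
        self._entries[key] = (token, now_ms + ttl_ms)
        return True

    def delete_if_owner(self, key, token, now_ms):
        """Mimics an atomic compare-and-delete so one holder cannot release another's lock."""
        current = self._entries.get(key)
        if current is None or current[1] <= now_ms or current[0] != token:
            return False
        del self._entries[key]
        return True


def try_acquire(store, resource, ttl_ms, now_ms):
    """Return an owner token on success, or None if another writer holds the lock."""
    token = uuid.uuid4().hex
    acquired = store.set_if_absent(f"lock:{resource}", token, ttl_ms, now_ms)
    return token if acquired else None


def release(store, resource, token, now_ms):
    return store.delete_if_owner(f"lock:{resource}", token, now_ms)


store = InMemoryLockStore()
first = try_acquire(store, "shared-collection", ttl_ms=5000, now_ms=0)
second = try_acquire(store, "shared-collection", ttl_ms=5000, now_ms=100)
wrong_release = release(store, "shared-collection", "not-the-owner", now_ms=200)
owner_release = release(store, "shared-collection", first, now_ms=200)
third = try_acquire(store, "shared-collection", ttl_ms=5000, now_ms=300)
after_expiry = try_acquire(store, "shared-collection", ttl_ms=5000, now_ms=5300)
```

The expiry matters as much as the lock itself: a processor that crashes while holding the lock cannot block the other writer forever, which is why the last acquisition succeeds once the TTL has elapsed.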

Validate the hardest migration with evidence, not assumptions. The primary ingestion worker decoded a binary payload format tightly coupled to the legacy runtime. A mismatch would silently corrupt audit data. We mandated a shadow run — the new service processing in parallel with the legacy worker, comparing output byte-for-byte, with cutover gated on 100% parity. This added weeks but eliminated the highest-consequence risk.
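The cutover gate can be sketched as follows: both decoders process the same payloads, outputs are compared as raw bytes, and cutover is allowed only when nothing differs. The decoder functions and message ids here are hypothetical placeholders, not the real binary format.

```python
from dataclasses import dataclass, field


@dataclass
class ParityReport:
    compared: int = 0
    mismatched_ids: list = field(default_factory=list)

    @property
    def parity(self):
        """Fraction of messages whose outputs matched exactly."""
        if self.compared == 0:
            return 0.0
        return (self.compared - len(self.mismatched_ids)) / self.compared

    @property
    def cutover_allowed(self):
        # Gate on 100% parity, and require evidence: zero comparisons is not a pass.
        return self.compared > 0 and not self.mismatched_ids


def shadow_compare(messages, legacy_decode, candidate_decode):
    """Run both decoders on every (message_id, payload) and compare bytes exactly."""
    report = ParityReport()
    for message_id, payload in messages:
        report.compared += 1
        if legacy_decode(payload) != candidate_decode(payload):
            report.mismatched_ids.append(message_id)
    return report


# Hypothetical decoders: the buggy candidate silently drops trailing zero bytes.
def legacy_decode(payload):
    return payload[::-1]


def buggy_candidate_decode(payload):
    return payload[::-1].rstrip(b"\x00")


messages = [("m1", b"\x01\x02"), ("m2", b"\x00\x03")]
bad_run = shadow_compare(messages, legacy_decode, buggy_candidate_decode)
good_run = shadow_compare(messages, legacy_decode, legacy_decode)
```

Recording the mismatched ids, rather than only a percentage, gives the team specific payloads to replay when a run falls short of full parity.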

The solution

The target architecture replaces the single worker with five independent .NET 8 Worker Services. Each owns its own message queue, container image, CI/CD pipeline, scaling profile, and failure domain.

Phase 0 established the shared foundation — solution structure, message contracts, logging standards, health probes — so every subsequent extraction started from a proven template.

Phase 1 extracted the lowest-risk workloads to validate the infrastructure pattern in production with real traffic before any high-stakes services were in play.

Phase 2 extracted three mid-complexity services in dependency order — fewest dependencies first, shared-state dependencies last (only after contention was resolved).

Phase 3 tackled the primary ingestion worker with shadow run validation, performance budgets, and defined pause criteria.

Phase 4 disabled the monolith's consumers, ran a multi-week soak period, and decommissioned the legacy service.

Result

Independent scaling per workload. Deployments whose blast radius is limited to a single service. Per-service observability with structured logging and dead-letter queue alerting. And the shared collection race condition, the system's most dangerous latent defect, eliminated through Redis locking and read replica routing.
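As a rough illustration of the observability piece, the sketch below retries a failing handler, emits one structured (JSON) log line per failure, and parks the message in a dead-letter list once attempts are exhausted. The field names, retry count, and alert threshold are assumptions; a real service would rely on the broker's own dead-letter queue rather than an in-process list.

```python
import json


def process_with_dead_letter(messages, handler, max_attempts=3):
    """Return (dead_letters, log_lines) after trying each message up to max_attempts."""
    dead_letters = []
    log_lines = []
    for message in messages:
        for attempt in range(1, max_attempts + 1):
            try:
                handler(message)
                break  # success: stop retrying this message
            except Exception as error:
                log_lines.append(json.dumps({
                    "event": "handler_failed",
                    "message_id": message["id"],
                    "attempt": attempt,
                    "error": str(error),
                }))
        else:
            # Loop ended without a break: every attempt failed.
            dead_letters.append(message)
    return dead_letters, log_lines


def should_alert(dead_letters, threshold=1):
    """Alert as soon as the dead-letter count reaches the threshold."""
    return len(dead_letters) >= threshold


def handler(message):
    # Hypothetical handler that rejects one malformed message.
    if message["id"] == "bad":
        raise ValueError("malformed payload")


dead, logs = process_with_dead_letter([{"id": "ok"}, {"id": "bad"}], handler)
alert = should_alert(dead)
```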

Takeaways

1. Frame decomposition as risk management, not refactoring. The business case wasn't "cleaner code"; it was deployability, scalability, and data integrity. Framing it as risk mitigation secured the investment and set the right expectations for timeline and sequencing.

2. Sequence by risk, earn trust through execution. Starting with lowest-risk extraction and ending with highest gave the team and stakeholders compounding confidence at each phase. By the time we reached the hardest migration, the playbook was proven.

3. Block the timeline to protect the migration. Refusing to extract the dependent service until coordination was solved, and refusing to cut over the ingestion worker until the shadow run confirmed parity, were the two decisions that kept this migration from creating more problems than it solved.